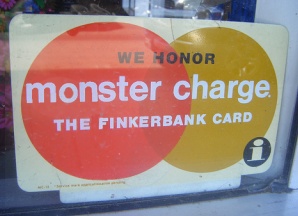

Credit card companies push around gobs and gobs of data every single day, and MonsterCharge (where Mike worked) was no different. However, the one thing that set MonsterCharge apart from many other credit card companies was the sheer volume of data that they worked with on a daily basis. The production environment had tiers, the test environment had tiers, heck, the tiers even had sub-tiers!

Nearly a year after the new system was deployed, the total size of all managed data stored on their Oracle databases had swelled to nearly 40 terabytes, and with good reason. Close to 3,200 concurrent users generated a continuous 1,200 transactions per second, sometimes spiking to around 2,000 on some days. The "Mission Accomplished" banner had long ago been hung on the wall, all the project managers had received cushy bonuses, and some had even gotten promotions.

Our Dog Food Tastes Best (unless someone else makes it)

However, in reality, all was not well in MonsterCharge-ville. If the super-stellar farm of servers that processed transactions was their prodigal son, the replication server was their red-headed stepchild. Since financial transactions are serious business, the data needed to be well protected, and any inbound transactions were written to a hot standby and a disaster recovery site. Due to the sheer volume of data, the system architects felt that Oracle's solution was not robust enough and was just too darn expensive. So, unlike the rest of the project, which relied upon vendor-supplied modules, they felt it best to create their own replication system. From scratch.

The good news was, just like Jurassic Park, the credit card company spared no expense in the development of the replication system and the overall end result did work. However, also just like Jurassic Park, throughout the project, the outside vendor who created their replication system had been positioning themselves to be as irreplaceable as possible. Shortly after go-live, the number and type of errors showing up in the replication system suggested that quality had been kept just high enough to guarantee overall functionality while providing a steady stream of billable hours. After a month of wearying arguments with the vendor over the difference between 99.9% and 99.999% uptime in the original spec, MonsterCharge dropped the vendor and moved support in-house.

Failure to Replicate

With the outside vendor out of the picture, uptime of the replication system was on the rise and management couldn't have been more pleased about having kicked the vendor and their smarmy development practices to the curb. Most of the problems they found were minor and generally easy to track down, but after about six months of fighting fires, the entire replication system came to a screeching and completely dead halt late during batch processing one night.

Figuring it to be a one-time fluke, the server support team bounced the Oracle instance and everything was up and running again...until it crashed an hour later, then thirty minutes later, and finally, once every ten minutes. Ultimately, it took six hours of constant restarting to complete the replication of one night's worth of batch transactions. The development team and management were stymied. Was it a "time bomb" left by the disgruntled vendor? A virus? At this point, anything, including cosmic rays, was a possibility. No detailed reports were available, and manually sifting through hundreds of thousands of daily transactions was not the most efficient way to diagnose what went wrong, so the decision was made to improve the replication system's logging and wait for the next time it failed.

The next night, sure enough, the replication system came down hard during nightly processing. The logs showed that the incoming data at the time was vanilla at best, but every time a transaction was processed for a hairdresser and new MonsterCharge customer, Lydia's Hair Update in Melbourne, the server crashed. Was the single quote in the name causing an issue? Impossible. The offending SQL at the time was using the company's unique ID number. Then, by chance, someone noticed that the counts of attempted data changes on the Production server and the replication server were close, but not equal. The counts on the replication server were higher by about a dozen.

Since a higher number on the replication server meant more updates were executed there than in Production, Production's detailed logs were reviewed (there was actually a system-wide error report) and the culprit was quickly found. To find what was supposed to be sent over to the replication server, the Production server scanned all executed SQL statements for DML transactions (INSERTs, UPDATEs, DELETEs) and ...erm...replicated... the transactions on the replication server.

As it turned out, the Achilles' heel was a "feature" to handle the possibility of nested DML statements. The developers on the Production side of things prevented the replication process from looking for nested statements and breathed a sigh of relief that at least nobody had named their business after reading a certain XKCD cartoon.
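The story never shows the scanner itself, so the following is only a sketch of one plausible way a keyword scan could miscount. It assumes the scan searched the raw statement text for DML keywords, including text inside string literals. The table name, column names, merchant ID, and both function names are hypothetical.

```python
import re

# DML keywords a replication scanner might look for (assumed, not from the story).
DML_KEYWORDS = re.compile(r"\b(INSERT|UPDATE|DELETE)\b", re.IGNORECASE)

# A single-quoted SQL string literal, where '' is an escaped quote.
STRING_LITERAL = re.compile(r"'(?:[^']|'')*'")


def count_dml_naive(sql: str) -> int:
    """Count DML keywords anywhere in the statement text, literals included."""
    return len(DML_KEYWORDS.findall(sql))


def count_dml_ignoring_literals(sql: str) -> int:
    """Blank out string literals first, so data values cannot look like SQL."""
    return len(DML_KEYWORDS.findall(STRING_LITERAL.sub("''", sql)))


# Hypothetical statement carrying the merchant name as plain data.
statement = (
    "INSERT INTO transactions (merchant_id, merchant_name, amount) "
    "VALUES (48213, 'Lydia''s Hair Update', 45.00)"
)

print(count_dml_naive(statement))              # counts INSERT plus the "Update" in the name
print(count_dml_ignoring_literals(statement))  # counts only the real INSERT
```

Under these assumptions, the naive count sees a "nested" UPDATE that never existed, which would explain a replication-side counter running higher than Production's. Blanking out literals is just one possible guard; the story only says that the nested-statement lookup was disabled.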